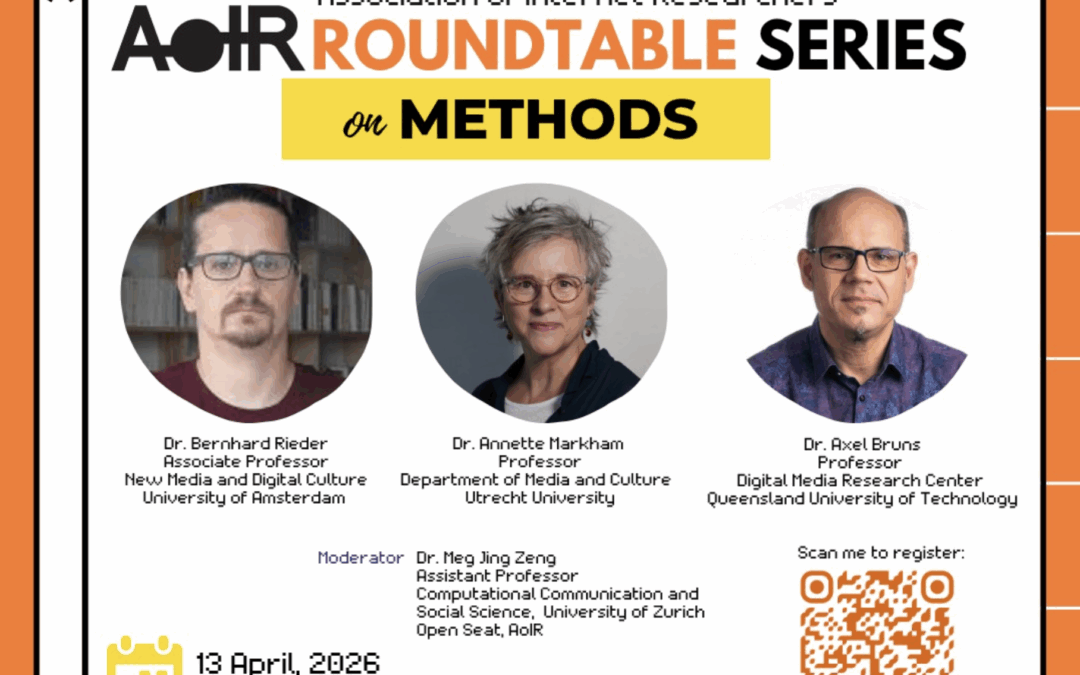

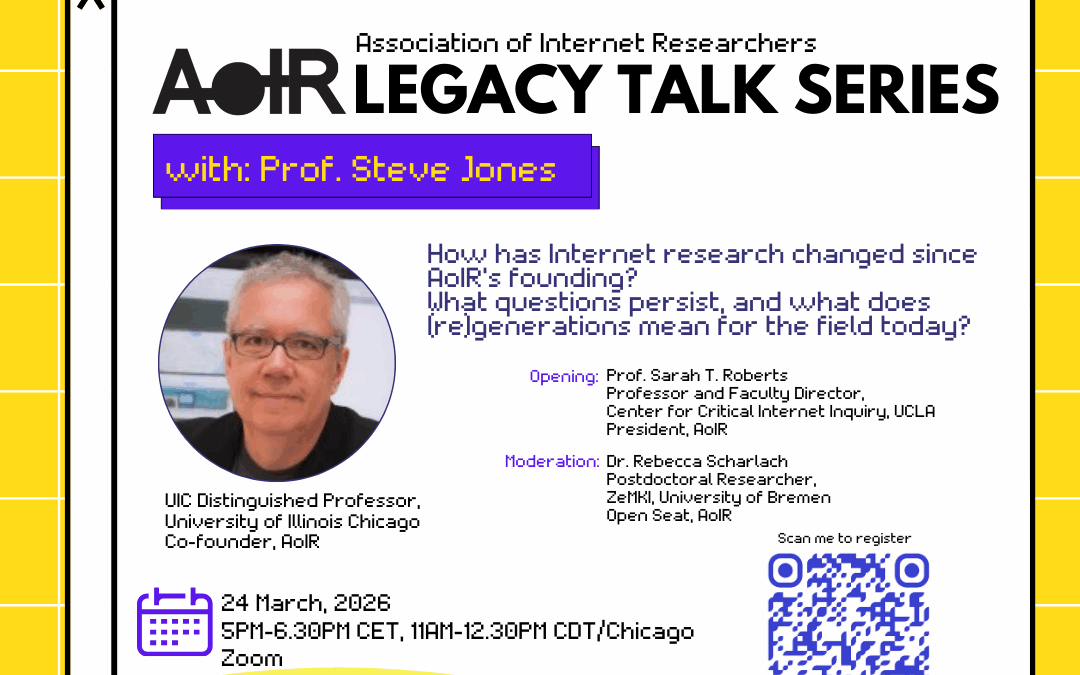

AoIR is happy to announce the first roundtable in our lecture series: Methods: How Internet Research Has Evolved.

When: 13 Apr 2026 @ 18:00 - 19:30 (CEST)

Location: Zoom (link provided upon registration)

We bring together long-standing AoIR members Annette N....